Object Size Measurements

The following list describes measurements relating primarily to object size as measured from a binary image. These measurements are typically based on a cross-sectional or plane view. For a non-calibrated system, measurements are expressed in number of pixels; for a calibrated system, they are expressed in units of measure, e.g., microns.

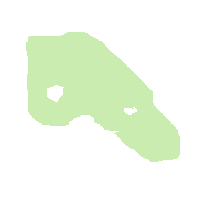

Area

The area is the number of pixels detected within the boundary of an object (excluding holes).

Filled Area

The total area enclosed by the boundary of the object, including holes.

Breadth

The estimated average cross-section of a long thin object.

Does not yield accurate information when applied to round or rectangular objects with a small aspect ratio (e.g., 1.0 to 2.0).

| Breadth ~ 0.25 * (PERIMETER - (PERIMETER ^ 2 - 16 * AREA) ^ 0.5) |

Filled Diameter

The effective circular diameter computed from its filled area.

This is used in the case that the outer diameter is required for an object which has one or more holes. Another use is to compare the inner and outer diameters of an object with a single hole. This measurement can be combined with the Hole Diameter by creating a Custom Object Measurement.

| Filled Diameter = 2 * ( (FILLED AREA / PI ) ^ 0.5 ) |

Width

Object's width: the maximum extent in the x-dimension.

Also called the Horizontal Feret Diameter. Width can be averaged with height to give an estimate of object diameter, especially if the objects are circular. The difference between this estimate of Diameter and the Filled Diameter (which is estimated from Filled Area) can be used to give a shape factor index relating to eccentricity while the ratio of these quantities can be used as an estimate of horizontal-to-vertical aspect ratio.

Height

Object's height: the maximum extent in the y-dimension.

Also called the Vertical Feret Diameter. Height can be averaged with Width (see above) to give an estimate of object Diameter, especially if the objects are circular. The difference between this estimate of Diameter and the Filled Diameter (which is estimated from Filled Area) can be used to give a shape factor index relating to eccentricity; the ratio of these quantities can be used as an estimate of horizontal-to-vertical aspect ratio.

Hole Area %

Total area of holes in an object, as a percentage of the filled area.

Hole Count

Number of holes in an object. (Holes are filled before counting)

Hole Diameter

Effective circular diameter of the holes in an object (estimated from its Hole Area).

This measurement can be combined with the Filled Diameter by creating a Custom Object Measurement.
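As a minimal sketch of how the hole measurements relate to one another, the following Python computes Hole Area % and the two effective diameters from a filled area and a hole area. The function names are hypothetical, and applying the Filled Diameter formula to the Hole Area is an assumption, since the exact Hole Diameter formula is not stated here.

```python
import math

def hole_area_percent(hole_area, filled_area):
    """Total hole area as a percentage of the filled area."""
    return 100.0 * hole_area / filled_area

def effective_diameter(area):
    """Diameter of the circle that has the given area."""
    return 2.0 * math.sqrt(area / math.pi)

# Illustrative ring: outer radius 10 with a single circular hole of radius 5.
filled_area = math.pi * 10.0 ** 2
hole_area = math.pi * 5.0 ** 2

percent = hole_area_percent(hole_area, filled_area)
outer = effective_diameter(filled_area)  # Filled Diameter
inner = effective_diameter(hole_area)    # Hole Diameter (assumed formula)
print(percent, outer, inner)
```

Comparing `outer` and `inner` is the kind of combination a Custom Object Measurement could express, for example as a wall thickness of `(outer - inner) / 2`.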

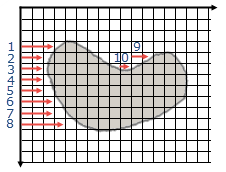

Intercepts 0°

The number of times a transition from background to foreground (not vice versa) occurs in the horizontal direction (0°) for the entire object. In other words, it is equal to the number of times the neighborhood configuration occurs in an object, where B is a background pixel and F is a foreground pixel.

Note: These measurements are at the object level, but in the Statistics display, the Total values of these measurements are given, to describe the measurements at the Field level.
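The horizontal intercept count can be sketched in a few lines of Python. This is only an illustration: the mask is a hypothetical list of rows with 1 for foreground and 0 for background, rows are scanned left to right, and pixels outside the image are treated as background, all of which are assumptions rather than documented behavior.

```python
def intercepts_0(mask):
    """Count background-to-foreground transitions along each row."""
    count = 0
    for row in mask:
        previous = 0  # assumption: anything left of the image is background
        for pixel in row:
            if previous == 0 and pixel == 1:
                count += 1  # B followed by F: one intercept
            previous = pixel
    return count

# Hypothetical binary mask containing a single object.
mask = [
    [0, 1, 1, 0, 1],
    [1, 1, 0, 0, 0],
    [0, 0, 0, 1, 1],
]
print(intercepts_0(mask))
```

Foreground-to-background transitions are deliberately ignored, which matches the "not vice versa" wording above.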

Intercepts 45°

The number of times that the neighborhood configuration occurs in a blob, where F is a foreground pixel, B is a background pixel and a dot can be any pixel value.

Intercepts 90°

The number of times that the neighborhood configuration occurs in a blob, where F is a foreground pixel and B is a background pixel.

Intercepts 135°

The number of times that the neighborhood configuration occurs in a blob, where F is a foreground pixel, B is a background pixel and a dot can be any pixel value.

Length

Estimated curvilinear length of a long thin object.

Does not yield accurate information when applied to round or rectangular objects with a small aspect ratio (e.g., 1.0 to 2.0).

| Length ~ 0.25 * (PERIMETER + (PERIMETER ^ 2 - 16 * AREA) ^ 0.5) |
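The Length and Breadth formulas are the two roots obtained by treating the object as a rectangle with PERIMETER = 2 * (Length + Breadth) and AREA = Length * Breadth. The sketch below evaluates both; the function name is hypothetical, and clamping a negative discriminant to zero (which happens for round objects) is an assumption made so the example does not fail, not documented behavior.

```python
import math

def length_breadth(perimeter, area):
    """Estimate (length, breadth) of a long thin object from perimeter and area."""
    discriminant = perimeter ** 2 - 16.0 * area
    # Round or compact shapes can make this negative; clamp as an assumption.
    root = math.sqrt(max(discriminant, 0.0))
    length = 0.25 * (perimeter + root)
    breadth = 0.25 * (perimeter - root)
    return length, breadth

# A 20 x 2 rectangle: perimeter 44, area 40.
length, breadth = length_breadth(perimeter=44.0, area=40.0)
print(length, breadth)
```

For the rectangle the estimates recover the true sides exactly, which is why the measurement is reliable only for objects that are close to long thin strips.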

Endpoint Length

The distance between the endpoints of a line.

Used when measuring elongated objects that have been processed by Skeletonize and Break Nodes in the Modify Menu. The disconnected one-pixel-thick objects are processed to measure the distance between the two ends of the skeleton, and the straight line distance is recorded. This measurement is used to compute Tortuosity.

Skeletonize (the "Ultimate-thin") recursively performs binary image thinning on the displayed binary image until the ultimate skeleton of every object in the image is produced.

Break Nodes pattern matches all of the objects in the displayed binary image to remove pixels which are located at the intersection of two or more lines. Break Nodes should be used after the Thinning, Skeletonize and Prune operators, which have produced a network of one-pixel-thick lines. Break Nodes separates the connected network of lines into individual line fragments for further processing or measurement.

Tortuosity is the degree to which an elongated object curves relative to its axis.

Note: If the objects in the image are not one pixel thick, the results will be unpredictable. For example, a circular object has no endpoints and will have an Endpoint Length of zero.

Perimeter

The distance around the edge of an object, including holes. An allowance is made for inside corners, so they are counted as the square root of 2 (about 1.4142) rather than 2.0. An object with an area of 1 will have a perimeter of 4.0.

If only the exterior perimeter is required, and the objects do have holes, use the Fill Holes option in Modify. The Fill Holes operator fills any internal holes in the binary image.

Note: In order to compare the perimeter between objects in different images, it is important that the images are of similar magnifications. The magnification of an object, especially one with an irregular boundary can significantly change the amount of detail visible. The more detail in the boundary, the longer the distance around its edge, and the larger the Perimeter measurement. Objects in the same image can be directly compared.

Perimeter can be used as an estimate of the curvilinear length of lines that are one pixel thick (after Skeletonize). The length of the line is measured twice along its length and is equal to the Perimeter divided by two.

The distance around the edge of an object can be measured in several ways. One way of estimating perimeter is the Boundary measurement. Another method measures the distance from each pixel to the next around the edge of the object using a one pixel increment for straight lines to the pixel neighbors up, right, down and left, and a Root Two increment for diagonal lines to the four neighboring corners. The result is a Perimeter measurement that takes full account of the eight-way connectivity of pixel neighbors.

| PERIMETER = Horizontal pixels + Vertical pixels + 1.4142 * Diagonal pixels |
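To illustrate the weighting in this formula, the sketch below sums step lengths along an 8-direction chain code. The chain-code convention (0 = right, counting counter-clockwise, so even codes are horizontal or vertical and odd codes are diagonal) and the function name are assumptions for the example; it shows the weighting only and does not reproduce the software's boundary tracing or its corner allowance.

```python
ROOT_TWO = 1.4142  # diagonal increment used in the formula above

def chain_perimeter(chain):
    """Weighted length of a closed 8-direction chain code."""
    straight = sum(1 for d in chain if d % 2 == 0)  # horizontal and vertical steps
    diagonal = sum(1 for d in chain if d % 2 == 1)  # diagonal steps
    return straight + ROOT_TWO * diagonal

square = [0, 0, 2, 2, 4, 4, 6, 6]  # two steps along each side of a square
diamond = [1, 3, 5, 7]             # four diagonal steps
print(chain_perimeter(square), chain_perimeter(diamond))
```

The diamond path is shorter than the square even though both enclose the same pixel grid span, which is the effect of counting diagonal moves at Root Two instead of 2.0.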

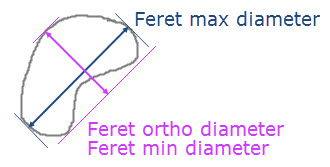

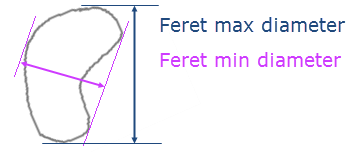

Feret Min Diameter

The smallest Feret diameter measured.

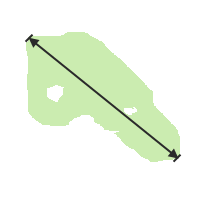

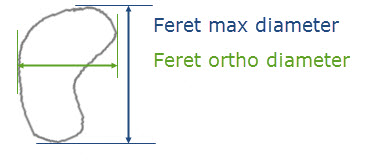

Feret Max Diameter

The largest Feret diameter measured.

Feret Ortho Diameter

The Feret diameter orthogonal to the Max Feret diameter.

Feret Mean Diameter

The average Feret diameter of all measured angles.
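A Feret (caliper) diameter at a given angle is the extent of the object when projected onto that direction. The following sketch derives the four Feret measurements above from a list of boundary points. The 1° angular step, the point-based (rather than pixel-extent) projection, and the function names are assumptions; the number of angles the software actually measures is not stated here.

```python
import math

def feret_at(points, angle_deg):
    """Extent of the points projected onto the direction at angle_deg."""
    t = math.radians(angle_deg)
    projections = [x * math.cos(t) + y * math.sin(t) for x, y in points]
    return max(projections) - min(projections)

def feret_summary(points, step_deg=1):
    """Min, max, orthogonal and mean Feret diameters over 0..179 degrees."""
    widths = {a: feret_at(points, a) for a in range(0, 180, step_deg)}
    max_angle = max(widths, key=widths.get)
    min_angle = min(widths, key=widths.get)
    return {
        "min": widths[min_angle],
        "max": widths[max_angle],
        "ortho": feret_at(points, max_angle + 90),  # orthogonal to the max Feret
        "mean": sum(widths.values()) / len(widths),
        "min_angle": min_angle,
        "max_angle": max_angle,
    }

# Corners of a 4 x 3 rectangle; its largest Feret diameter is the diagonal (5).
rectangle = [(0, 0), (4, 0), (4, 3), (0, 3)]
summary = feret_summary(rectangle)
print(summary)
```

A finer `step_deg` brings the maximum closer to the true diagonal at the cost of more projections per object.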

Roughness

1.0 - infinity. The roughness of an object.

| Roughness = Perimeter / Convex Perimeter |

Convex Perimeter

The approximate perimeter of the convex hull of an object.

Convex Area

The approximate area within the convex hull of an object. Holes do not affect this measurement.

Volume

The effective spherical volume of an object, estimated from its area.

Assumes a fairly round object.

| Volume = 4/3 * pi * (FILLED DIAMETER / 2 ) ^ 3 |
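As a small worked example, the following Python chains the Filled Diameter and Volume formulas. The function names are hypothetical; the sketch assumes the diameter is taken from the filled area, as the Filled Diameter description states.

```python
import math

def filled_diameter(filled_area):
    """Effective circular diameter for a given filled area."""
    return 2.0 * math.sqrt(filled_area / math.pi)

def spherical_volume(filled_area):
    """Volume of the sphere whose diameter is the Filled Diameter."""
    radius = filled_diameter(filled_area) / 2.0
    return 4.0 / 3.0 * math.pi * radius ** 3

# A disc of radius 5 (area 25 * pi) maps to a sphere of radius 5.
disc_area = math.pi * 5.0 ** 2
diameter = filled_diameter(disc_area)
volume = spherical_volume(disc_area)
print(diameter, volume)
```

Because the volume grows with the cube of the estimated radius, any error from a non-round object is amplified, which is why the measurement assumes a fairly round object.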

Radius Min

The minimum distance from the center of gravity to the boundary of the object.

Radius Max

The maximum distance from the center of gravity to the boundary of the object.

Runs Count

The number of pixel-scan runs the object is comprised of.

Chained Pixels

The pixels that are part of all chains of the object.

This object measurement will return the count of pixels in all chains of the object. This is effectively the number of pixels on the border of the object, given the current lattice mode of the blob.

Object Shape Measurements

Object shape measurements are dimensionless numerical values used for quantitative comparison. These measurements are composed of a combination of size measurements where the units have been canceled out.

Elongation

Length to breadth ratio of a long thin object.

| Elongation = Length / Breadth |

Feret Elongation

Estimation of elongation based upon the Feret max diameter and Feret min diameter.

Feret Aspect Ratio

Estimation of the aspect ratio based upon the Feret max diameter and Feret orthogonal diameter.

Compactness

1.0 - infinity. The greater the value, the more convoluted the object shape is.

Roundness

0.0 - 1.0. The closer to 1.0, the rounder the object is.

| Roundness = (4 * PI * AREA) / (PERIMETER) ^ 2 |

Note: Due to the digital nature of imaging measurements, the Perimeter of a single pixel is theoretical, and so measurements of very small objects (only a few pixels) may produce Roundness values that are significantly higher than 1.0.
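A quick numerical check of the Roundness formula, written as a minimal Python sketch with a hypothetical function name. It uses ideal geometric values rather than pixel-measured ones, so it does not reproduce the small-object effect described in the note.

```python
import math

def roundness(area, perimeter):
    """Roundness = 4 * pi * area / perimeter^2 (1.0 for an ideal circle)."""
    return 4.0 * math.pi * area / perimeter ** 2

circle = roundness(area=math.pi * 10.0 ** 2, perimeter=2.0 * math.pi * 10.0)
square = roundness(area=100.0, perimeter=40.0)
print(circle, square)
```

The ideal circle scores 1.0 and the square scores pi / 4, so lower values indicate shapes that enclose less area for the same perimeter.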

Tortuosity

The ratio of the straight length of a line to its curved length. The object must have been processed by Skeletonize and Break Nodes in the Modify Menu. The disconnected one-pixel-thick objects are processed to compute the ratio of the straight line distance to the curved distance between the two end points.

A Tortuosity measurement close to 1.0 will indicate that the object was very straight, where the Endpoint Length is close to the curvilinear length. A Tortuosity of 0.5 indicates a very curved object, such as a semicircle, or a horseshoe.

Note: If the objects in the image are not one pixel thick, the results will be unpredictable. For example, a circular object has no endpoints and will have an Endpoint Length of zero, so the Tortuosity will be wrong.

| Tortuosity = (Endpoint Length / (Perimeter / 2)) |
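The ratio can be illustrated with an ordered list of skeleton points. In this sketch the summed segment length of the path stands in for Perimeter / 2, and the straight distance between the first and last points stands in for Endpoint Length; both substitutions and the function names are assumptions made for illustration.

```python
import math

def path_length(points):
    """Curved length: sum of distances between consecutive points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def tortuosity(points):
    """Straight endpoint distance divided by the curved length."""
    endpoint_length = math.dist(points[0], points[-1])
    return endpoint_length / path_length(points)

straight_line = [(0, 0), (5, 0)]
l_shape = [(0, 0), (3, 0), (3, 4)]  # curved length 7, endpoint length 5
print(tortuosity(straight_line), tortuosity(l_shape))
```

A closed loop would have coincident endpoints and a result of zero, which mirrors the warning in the note about objects without endpoints.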

Object Intensity & Color Measurements

Intensity measurements are used to characterize and quantify pixel brightness values.

Transmittance %

The ratio of transmitted intensity to the maximum intensity given as a percentage.

For example, an object with 0% transmittance will have a mean gray level of zero.

Note: For color images, this is based upon the HSL color space definition of what luminosity is.

Absorbance

The estimated Optical Density (Absorbance), in OD units.

Absorbance values typically found from TV camera images are between 0 and 1.7, with a maximum of 2.4. To achieve higher Absorbance values, a densitometer may be necessary.

| Absorbance = Log10 (1 / Transmittance) and calibrated in Optical Density (OD) units |
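The relationship between Transmittance % and Absorbance can be sketched as follows. The 8-bit maximum of 255, the use of mean gray as the transmitted intensity, and the function names are assumptions for this example. Under the 8-bit assumption, the darkest non-zero gray level gives log10(255), about 2.41, which is consistent with the maximum of 2.4 quoted above.

```python
import math

MAX_GRAY = 255.0  # assumption: 8-bit gray scale

def transmittance_percent(mean_gray, max_gray=MAX_GRAY):
    """Transmitted intensity as a percentage of the maximum intensity."""
    return 100.0 * mean_gray / max_gray

def absorbance(transmittance_pct):
    """Optical density: log10(1 / transmittance), transmittance as a fraction."""
    if transmittance_pct <= 0:
        return math.inf  # no transmitted light: absorbance is unbounded
    return math.log10(100.0 / transmittance_pct)

od_ten_percent = absorbance(transmittance_percent(25.5))  # 10% transmittance
od_darkest = absorbance(transmittance_percent(1.0))       # gray level 1 of 255
print(od_ten_percent, od_darkest)
```

Each tenfold drop in transmittance adds 1.0 OD, so the limited gray-level depth of a camera image caps the measurable range.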

Min Gray

The minimum gray intensity of the object.

Max Gray

The maximum gray intensity of the object.

Mean Gray

The average gray intensity of the pixels in the object.

Total Gray

The sum of the gray levels of each pixel in the object.

The largest Total Gray value that can be measured does have a limit, 16777215, which can be reached if a very large, very bright object is measured. If this is the case, invert the gray image in Enhance, and measure the Total Gray using the opposite intensity.

Note: Total Gray can be used as an indicator of how optically dense objects are, especially if their size is uniform or if the size and intensity combination is useful. If it is necessary to relate objects strictly on the basis of average intensity, and they have different sizes, use the Mean Gray measurement. Gray level intensity measurements can be computed from RGB color images using part of the HSL (Hue/Saturation/Luminosity) transform.

Sdev Gray

The standard deviation of gray intensity of the object.

Var Gray

The statistical variance of gray intensity of the object.

Sum Square Gray

This is the total of all of the squared gray intensities of the object.

Range Gray

The range of gray intensities in all pixels of the object.

Mode Gray

The most frequently occurring gray intensity of the object.

Mode Freq Gray

The frequency of the statistical mode gray intensity of the object.
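The gray statistics above can all be derived from the list of pixel intensities inside one object, as in this minimal sketch. The function name is hypothetical, and two choices are assumptions because they are not specified here: the variance is the population variance (divided by N), and Range Gray is taken as maximum minus minimum.

```python
import math
from collections import Counter

def gray_statistics(pixels):
    """Per-object gray statistics from a flat list of pixel intensities."""
    n = len(pixels)
    total = sum(pixels)
    mean = total / n
    variance = sum((p - mean) ** 2 for p in pixels) / n  # population variance (assumption)
    mode, mode_freq = Counter(pixels).most_common(1)[0]
    return {
        "min": min(pixels),
        "max": max(pixels),
        "mean": mean,
        "total": total,
        "sdev": math.sqrt(variance),
        "var": variance,
        "sum_square": sum(p * p for p in pixels),
        "range": max(pixels) - min(pixels),  # assumption: max minus min
        "mode": mode,
        "mode_freq": mode_freq,
    }

stats = gray_statistics([10, 20, 20, 30])
print(stats)
```

If several intensities tie for the most frequent value, `Counter.most_common` returns the one encountered first; how the software breaks such ties is not stated.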

Ratio FN

Mean gray of ratio image (image field #N divided by Live image field).

Delta Mean Gray

The mean gray of Field #N - Field (#N-1).

Mean Hole Gray

The mean gray intensity of the holes in an object.

Total Hole Gray

The total of all gray intensity of the holes in an object.

Ratio of Means Im1Im2

Mean gray of object in image 1 divided by mean gray of the same object in image 2.

Mean of Ratios Im1Im2

Mean gray of ratio image (image 1 divided by image 2).

Ratio of Means Im2Im1

Mean gray of object in image 2 divided by mean gray of the same object in image 1.

Mean of Ratios Im2Im1

Mean gray of ratio image (image 2 divided by image 1).

Ratio of Means Im3Im4

Mean gray of object in image 3 divided by mean gray of the same object in image 4.

Mean of Ratios Im3Im4

Mean gray of ratio image (image 3 divided by image 4).

Ratio of Means Im4Im3

Mean gray of object in image 4 divided by mean gray of the same object in image 3.

Mean of Ratios Im4Im3

Mean gray of ratio image (image 4 divided by image 3).
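Ratio of Means and Mean of Ratios generally give different values for the same object, as this short sketch shows. The two lists are hypothetical pixel intensities of one object in two images, and skipping pixels whose divisor is zero is an assumption, since the handling of zero-valued pixels is not described here.

```python
def ratio_of_means(image_a, image_b):
    """Mean gray in image A divided by mean gray in image B."""
    mean_a = sum(image_a) / len(image_a)
    mean_b = sum(image_b) / len(image_b)
    return mean_a / mean_b

def mean_of_ratios(image_a, image_b):
    """Mean of the pixel-by-pixel ratio image A / B."""
    # Assumption: pixels with a zero divisor are left out of the ratio image.
    ratios = [a / b for a, b in zip(image_a, image_b) if b != 0]
    return sum(ratios) / len(ratios)

im1 = [10, 20, 30]
im2 = [5, 20, 10]
print(ratio_of_means(im1, im2), mean_of_ratios(im1, im2))
```

Mean of Ratios weights every pixel equally, so a few dim pixels in the divisor image can dominate it, whereas Ratio of Means is driven by the overall brightness of the object.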

Mean dose

The average calibrated intensity using the non-linear calibration.

Total dose

The total calibrated intensity using the non-linear calibration.

Mean Hue

The average hue of the object in an RGB image.

Mean Sat

The average saturation of the object in an RGB image.

Min Lumin

The minimum luminosity of the object in an RGB image.

Max Lumin

The maximum luminosity of the object in an RGB image.

Mean Lumin

The average luminosity of the object in an RGB image.

Total Lumin

The total of all luminosities of the object in an RGB image.

Sdev Lumin

The standard deviation of luminosity of the object in an RGB image.

Var Lumin

The statistical variance of luminosity of the object in an RGB image.

Sum Square Lumin

The total of all squared luminosities of the object in an RGB image.

Range Lumin

The range of luminosities in all pixels of the object in an RGB image.

Mode Lumin

The statistical mode luminosity of the object in an RGB image.

Mode Freq Lumin

The frequency of the most frequently occurring luminosity in the object in an RGB image.

Mean Hole Sat

The average saturation of the holes in the object in an RGB image.

Mean Hole Hue

The average hue of the holes in the object in an RGB image.

Mean Hole Lumin

The average luminosity of the holes in the object in an RGB image.

Total Hole Lumin

The total of all luminosities of the holes in the object in an RGB image.

Min Red, Green or Blue

The minimum red, green or blue intensity of the object.

Max Red, Green or Blue

The maximum red, green or blue intensity of the object.

Mean Red, Green or Blue

The average red, green or blue intensity of the object.

Total Red, Green or Blue

The total of all of the red, green or blue intensity of the object.

Sdev Red, Green or Blue

The standard deviation of red, green or blue intensity of the object.

Var Red, Green or Blue

The statistical variance in red, green or blue intensity of the object.

Sum Square Red, Green or Blue

The total of all of the squared red, green or blue intensities of the object.

Range Red, Green or Blue

The range of red, green or blue intensities in all pixels of the object.

Mode Red, Green or Blue

The most frequently occurring red, green or blue intensity in the object.

Mode Freq Red, Green or Blue

The frequency of the most frequently occurring red, green or blue intensity in the object.

Mean Hole Red, Green or Blue

The average red, green or blue intensity of the holes in the object.

Total Hole Red, Green or Blue

The total of all of the red, green or blue intensities of the holes in the object.

Correlation RG

Pearson's correlation between intensity levels of red and green.

Correlation RB

Pearson's correlation between intensity levels of red and blue.

Correlation GB

Pearson's correlation between intensity levels of green and blue.
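Pearson's correlation between two channels can be computed from the paired pixel intensities of an object, as in this minimal sketch. The function name and the sample channel values are hypothetical; a channel with constant intensity has zero spread and would cause a division by zero, which the sketch does not handle.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long intensity lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)

red = [10, 20, 30, 40]
green = [12, 22, 32, 42]  # rises with red
blue = [40, 30, 20, 10]   # falls as red rises
print(pearson(red, green), pearson(red, blue))
```

Values near +1 indicate channels that brighten together, values near -1 indicate channels that vary in opposition, and values near 0 indicate no linear relationship.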

Colocalization RG Red

Contribution of red to the colocalization of red and green.

Colocalization RG Green

Contribution of green to the colocalization of red and green.

Colocalization RB Red

Contribution of red to the colocalization of red and blue.

Colocalization RB Blue

Contribution of blue to the colocalization of red and blue.

Colocalization GB Green

Contribution of green to the colocalization of green and blue.

Colocalization GB Blue

Contribution of blue to the colocalization of green and blue.

Overlap RG

Overlap between intensity levels of red and green.

Overlap RG Red

Contribution of red to the overlap of red and green.

Overlap RG Green

Contribution of green to the overlap of red and green.

Overlap RB

Overlap between intensity levels of red and blue.

Overlap RB Red

Contribution of red to the overlap of red and blue.

Overlap RB Blue

Contribution of blue to the overlap of red and blue.

Overlap GB

Overlap between intensity levels of green and blue.

Overlap GB Green

Contribution of green to the overlap of green and blue.

Overlap GB Blue

Contribution of blue to the overlap of green and blue.

Ratio of Means RG

The ratio of mean red to mean green intensities of the object.

Ratio of Means RB

The ratio of mean red to mean blue intensities of the object.

Ratio of Means GR

The ratio of mean green to mean red intensities of the object.

Ratio of Means GB

The ratio of mean green to mean blue intensities of the object.

Ratio of Means BR

The ratio of mean blue to mean red intensities of the object.

Ratio of Means BG

The ratio of mean blue to mean green intensities of the object.

Mean of Ratios RG

The mean of the ratios of all red to green intensities (pixels) of the object.

Mean of Ratios RB

The mean of the ratios of all red to blue intensities (pixels) of the object.

Mean of Ratios GR

The mean of the ratios of all green to red intensities (pixels) of the object.

Mean of Ratios GB

The mean of the ratios of all green to blue intensities (pixels) of the object.

Mean of Ratios BR

The mean of the ratios of all blue to red intensities (pixels) of the object.

Mean of Ratios BG

The mean of the ratios of all blue to green intensities (pixels) of the object.

Object Position Measurements

Positional measurements are used to collect spatial information as well as to determine object location (absolute and relative positions).

Centroid

Centroid is useful for identifying the position of objects and can be used for spatial distribution statistics.

- Centroid X - center horizontal coordinate of the bounding box of the object.

- Centroid Y - center vertical coordinate of the bounding box of the object.

Center of Gravity

The CofG measurements are computed from the boundary coordinates and yield a more accurate location than Centroid.

- COFG X - center of gravity X coordinate of the object computed from boundary.

- COFG Y - center of gravity Y coordinate of the object computed from boundary.
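The difference between the two position measurements shows up clearly on an asymmetric shape. The sketch below compares the bounding-box center (Centroid) with a center of gravity computed from boundary vertices using the standard polygon-centroid formula. Using that formula is an assumption; the exact boundary computation used by the software is not given here, and the function names are hypothetical.

```python
def bounding_box_center(vertices):
    """Centroid as defined above: center of the bounding box."""
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    return (min(xs) + max(xs)) / 2.0, (min(ys) + max(ys)) / 2.0

def polygon_center_of_gravity(vertices):
    """Area-weighted center of a simple polygon given its boundary vertices."""
    area2 = cx = cy = 0.0
    for (x0, y0), (x1, y1) in zip(vertices, vertices[1:] + vertices[:1]):
        cross = x0 * y1 - x1 * y0
        area2 += cross              # accumulates twice the signed area
        cx += (x0 + x1) * cross
        cy += (y0 + y1) * cross
    return cx / (3.0 * area2), cy / (3.0 * area2)

# L-shaped object: a 4 x 1 bar joined to a 1 x 3 bar.
l_shape = [(0, 0), (4, 0), (4, 1), (1, 1), (1, 4), (0, 4)]
centroid = bounding_box_center(l_shape)
cofg = polygon_center_of_gravity(l_shape)
print(centroid, cofg)
```

For the L shape the bounding-box center lies at (2, 2), which is outside the object, while the center of gravity is pulled toward the corner where most of the area sits.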

X Min

The leftmost X coordinate of the object.

Y Min

The first Y coordinate of the object, scanning top to bottom, left to right.

X Max

The rightmost X coordinate of the object.

Y Max

The bottommost Y coordinate of the object.

X Min at Y Min

The first X coordinate of the object, scanning top to bottom, left to right.

X Max at Y Max

The rightmost X coordinate of the object on its bottommost row (Y Max).

Y Min at X Max

The topmost Y coordinate of the object in its rightmost column (X Max).

Y Max at X Min

The bottommost Y coordinate of the object in its leftmost column (X Min).

First Point X

The X coordinate of the top leftmost point on the object.

First Point Y

The Y coordinate of the top leftmost point on the object.

Feret Min Angle

The angle of the smallest Feret diameter measured.

Feret Max Angle

The angle of the largest Feret diameter measured.

Symmetry Angle

-90° - +90°. The axis of symmetry of the object. It is the angle where the object has the least moment of inertia.
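The axis of least moment of inertia can be found from the second-order central moments of the object's pixel coordinates. The sketch below uses the standard principal-axis formula; the function name is hypothetical, and the sign convention (Y increasing in the same sense as the input coordinates, angle measured from the X axis) is an assumption that may differ from the software's convention.

```python
import math

def symmetry_angle(points):
    """Angle in degrees of the axis with the least moment of inertia."""
    n = len(points)
    cx = sum(x for x, _ in points) / n
    cy = sum(y for _, y in points) / n
    mu20 = sum((x - cx) ** 2 for x, _ in points)
    mu02 = sum((y - cy) ** 2 for _, y in points)
    mu11 = sum((x - cx) * (y - cy) for x, y in points)
    # Principal-axis orientation; the half-angle keeps the result within +/-90 degrees.
    return math.degrees(0.5 * math.atan2(2.0 * mu11, mu20 - mu02))

diagonal = [(0, 0), (1, 1), (2, 2), (3, 3)]
horizontal = [(0, 0), (1, 0), (2, 0)]
print(symmetry_angle(diagonal), symmetry_angle(horizontal))
```

A perfectly round object has equal moments in every direction, so its angle is not well defined and small pixel differences can swing the result.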

Secondary Angle

-90° - +90°. The angle perpendicular to the Symmetry angle.

Vector to Field

The distance from the center of the object to the center of the field.

Angle to Field

The angle in degrees from the center of the object to the center of the field.

Class Number

The number of the current measurement class being measured. This measurement may be used when data is saved to a file, to allow selection of data based on Class. The Class number is saved in data documents by default.

Field Number

The number of the current field being measured. This measurement may be used when data is saved to a file, to allow selection of data based on Field. The Field number is saved in data documents by default.